My last few blogs focused on MSPs going to market with AI services and the common challenge of knowing where to start. While Breach Secure Now has built a full success path to help MSPs build, sell, and deliver AI services, one area can (and should) be addressed immediately: AI risk.

AI risk is already a growing threat to SMBs, and it shows up in two ways:

- Risk inside the business

- Risk outside the business

Both matter, and this series breaks them down into clear, actionable pieces based on what MSPs are seeing in the field.

This three-part series covers:

- Part 1: AI risk inside the business

- Part 2: AI risk outside the business (spoiler: cyber criminals 😨)

- Part 3: A high-impact strategy to reduce AI risk while driving real productivity and ROI 💸

Let’s start inside the business.

Employees Are Already Using AI

In more than 90% of companies today, employees are already using AI tools. That’s not surprising. Tools like ChatGPT and Gemini are everywhere, and AI is now embedded in everyday business software and search results. People are quickly realizing how powerful and helpful these tools can be.

So where’s the risk?

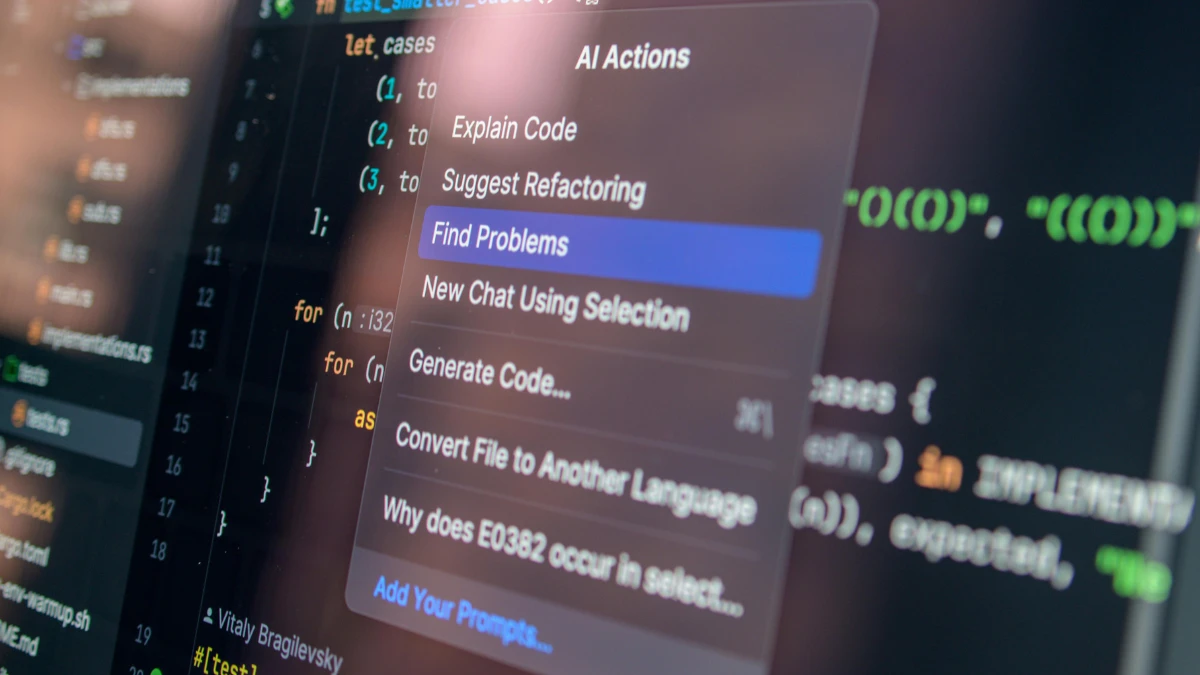

While over 90% of companies report employees using personal AI tools for work, only about 40% have purchased approved or secure AI solutions. That gap drives Shadow AI: employees turning to free, unapproved tools, often without leadership realizing it. In some organizations, hundreds of AI tools are in use without oversight. (Source: State of AI in Business 2025 Report, MLQ.ai)

When employees use free AI tools and enter business data, that information may be used to train the model. OpenAI confirms that conversations in consumer versions of ChatGPT (Free and Plus) can be used for training unless users opt out. That means sensitive data can persist inside these models and potentially be exposed later.

As BSN’s AI Solutions Strategist Lindsay Hays puts it:

“Nothing is free in life. If it claims to be free, you are the product.”

That’s Shadow AI risk in a nutshell.

A Simple Example

Jim works in finance and is racing to finish month-end close. To save time, he pastes a spreadsheet with revenue data, vendor payments, and acquisition notes into a free AI tool and asks for help reconciling discrepancies.

He gets a clean summary in seconds. It feels like a win.

What Jim doesn’t realize is that he just uploaded sensitive financial data into a consumer AI tool his company hasn’t approved or secured.

Jim didn’t do anything malicious. He saved time and likely improved his output. Most employees don’t even realize this is risky, and that’s exactly the problem.

This is the first story MSPs should be telling: lead with the data, then make it real.

Some MSPs are already uncovering Shadow AI by running DNS scans inside client environments. Showing clients the actual AI tools in use on their own network, and the exposure that comes with them, makes these conversations much easier and far more impactful.
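For MSPs wondering what a basic DNS-based Shadow AI check might look like, here is a minimal Python sketch. It assumes you can export DNS query logs as plain text with one query per line in the form `timestamp client_ip domain`; that format is a hypothetical example, since every resolver and firewall exports differently. The domain list is also an illustrative, non-exhaustive assumption to adjust per client. Keep in mind that DNS data only shows which AI services devices are reaching, not what was pasted into them.

```python
from collections import Counter
from typing import Iterable, Optional

# Illustrative, non-exhaustive list of consumer AI domains (an assumption; adjust per client).
AI_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
    "perplexity.ai": "Perplexity",
    "copilot.microsoft.com": "Copilot",
}


def match_ai_tool(domain: str) -> Optional[str]:
    """Return the AI tool name if the domain is a listed domain or one of its subdomains."""
    domain = domain.lower().rstrip(".")  # normalize case and trailing root dot
    for known, tool in AI_DOMAINS.items():
        if domain == known or domain.endswith("." + known):
            return tool
    return None


def summarize_shadow_ai(log_lines: Iterable[str]) -> Counter:
    """Count DNS queries per (client_ip, tool) from hypothetical 'timestamp client_ip domain' lines."""
    hits: Counter = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) < 3:
            continue  # skip blank or malformed lines
        _, client, domain = parts[:3]
        tool = match_ai_tool(domain)
        if tool:
            hits[(client, tool)] += 1
    return hits


if __name__ == "__main__":
    sample = [
        "2025-01-06T09:14:02 10.0.0.21 chatgpt.com",
        "2025-01-06T09:15:40 10.0.0.21 chatgpt.com",
        "2025-01-06T09:20:11 10.0.0.35 gemini.google.com",
        "2025-01-06T09:22:03 10.0.0.35 example.com",
    ]
    for (client, tool), count in summarize_shadow_ai(sample).most_common():
        print(client, tool, count)
    # prints:
    # 10.0.0.21 ChatGPT 2
    # 10.0.0.35 Gemini 1
```

A simple per-device, per-tool count like this is often enough to open the QBR conversation; a real engagement would pull logs from the client’s actual DNS filter or firewall and maintain a much larger domain list.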

The Second Internal AI Risk: Trusting the Output

There’s another internal AI risk that shows up after adoption begins: overtrusting AI outputs.

If you’ve used AI, you’ve seen it: confident, polished answers that are completely wrong. These errors, known as hallucinations, aren’t bugs. They’re a known behavior of these models, which is why training and verification matter.

I saw this firsthand when BSN began adopting AI. Employees would prompt, copy the answer, and move on. That leads to one of two bad outcomes:

- Poor-quality work makes it through unchecked, or

- The employee catches errors, decides AI is “dumb,” and stops using it entirely

Both outcomes hurt the business.

Back to Jim: if he trusted the output without verifying it, a mission-critical financial report could go out wrong, with potentially disastrous consequences.

This is the second story MSPs should be telling. It’s not about banning AI. It’s about setting the right expectations so employees use it correctly.

(Read more: Where AI Meets Cybersecurity: A Practical Starting Point for MSPs)

When expectations are wrong, quality drops, trust erodes, and adoption stalls.

Why This Matters for MSPs

These risks are real, and nearly every company is facing them, whether they know it or not. If you aren’t having these conversations with your clients, someone else will be.

The good news? This is already your lane. Clients trust MSPs to identify risk before it becomes a problem, especially when it comes to cybersecurity.

MSP Talking Points for Your Next QBR

- Shadow AI is already happening: 90%+ of companies report employees using personal AI tools, while only ~40% have approved solutions in place. (Source: State of AI in Business 2025 Report – MLQ.ai)

- Free AI tools expose sensitive data: In 2023, Samsung discovered employees had pasted proprietary source code and internal documents into ChatGPT, prompting a company-wide ban on public AI tools. (Source: Legal Dive)

- AI hallucinations are causing real damage: Legal analysts have documented 120+ court cases where AI-generated content introduced fake citations or factual errors, leading to sanctions and dismissed filings. (Source: Business Insider)

Use these examples to show clients that AI risk isn’t theoretical. It’s already happening inside real businesses, driven by good intentions and productivity pressure.

Internal AI risk is only half the story.

(Read more: Meeting AI with AI: How MSPs Can Stay Ahead of Evolving Cyber Threats)

In Part 2, we’ll cover external AI risk, how cyber criminals are using AI, and why social engineering attacks are getting harder to spot.

And if you hate cliffhangers, our AI go-to-market team can help you start these conversations with clients today.

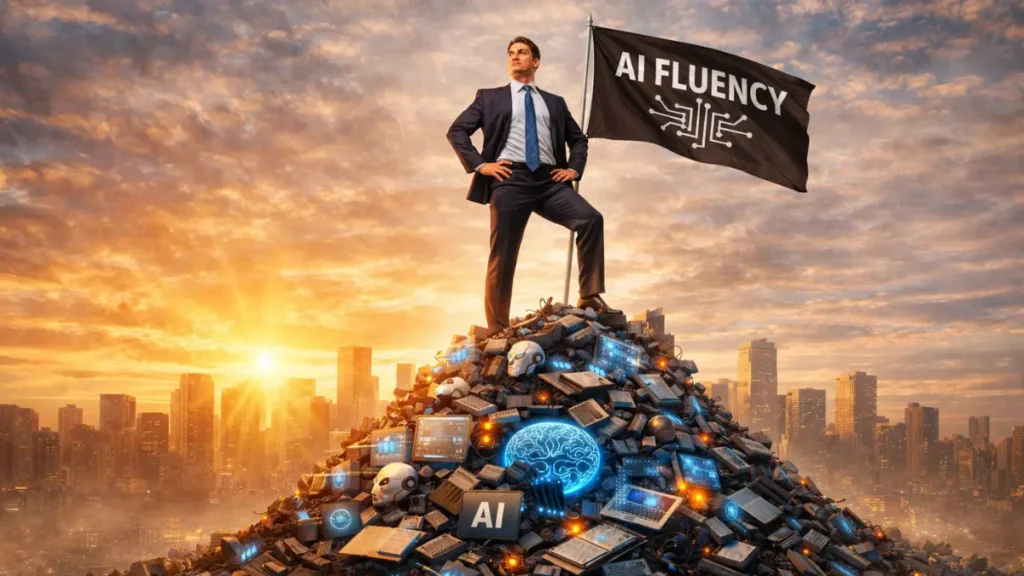

Now Available: Gen AI Certification From BSN

Lead Strategic AI Conversations with Confidence

Breach Secure Now’s Generative AI Certification helps MSPs simplify the AI conversation, enabling clients to unlock the value of gen AI for their business, build trust, and drive growth – positioning you as a leader in the AI space.