AI tools like ChatGPT, Claude, and Gemini are showing up in workplaces everywhere. In many cases, that's not because companies rolled them out; it's because employees discovered them on their own.

An employee pastes a customer contract into ChatGPT to summarize it. A manager uploads meeting notes to generate action items. A developer asks AI to debug a piece of internal code.

None of these actions feel risky. In fact, tasks like these are exactly why the tools are exploding in popularity: they help people work faster. But there's a growing issue many businesses don't see yet.

When employees paste company data into public AI tools, that information may be leaving your organization’s control.

This problem has a name: Shadow AI. And it’s already widespread.

A recent study found that over 70% of employees are using AI tools at work, and many of them are doing it through personal accounts that IT teams cannot monitor.

That means sensitive business data may be flowing into systems your company doesn’t control.

What Is Shadow AI?

Shadow AI happens when employees use AI tools for work without company oversight or security controls. That might include tools like:

- ChatGPT

- Claude

- Gemini

- AI writing assistants

- AI coding tools

Most employees aren't trying to break rules. The reality is that most companies haven't created any rules yet, so employees experiment with tools on their own to become more productive. And in many cases, they succeed.

The issue is that these tools often sit outside your company’s technology stack, which means IT and security teams have no visibility into what data might be shared with them. And that’s where things start to get risky.

5 Real Incidents That Show the Risk

Most of these incidents have something in common: employees weren't trying to leak company information; they were simply trying to do their jobs more efficiently. The others show what can happen to data once it has been submitted to an AI platform.


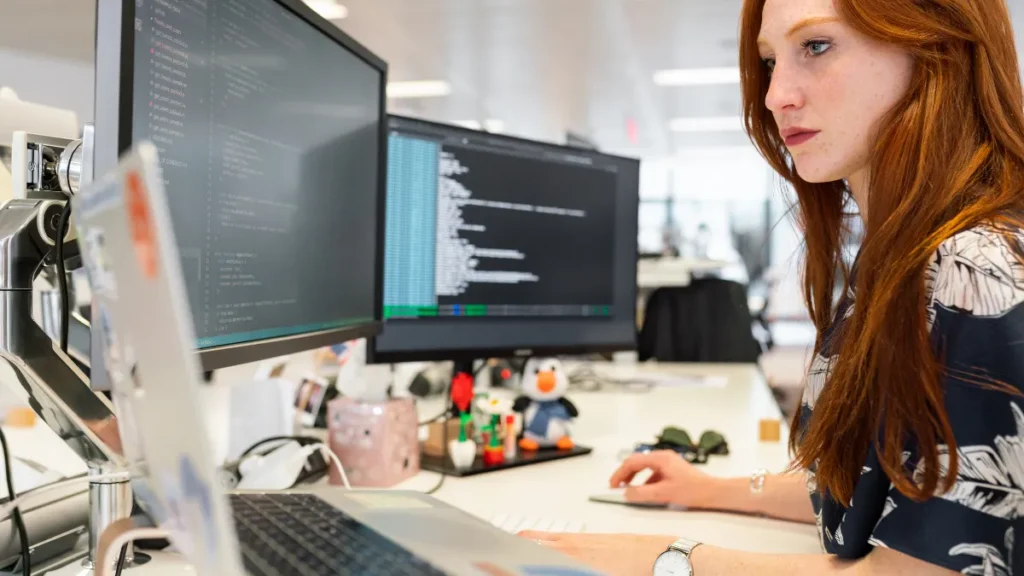

Samsung Engineers Leak Semiconductor Source Code

One of the most well-known Shadow AI incidents happened at Samsung in 2023.

Engineers were using ChatGPT to help debug code and optimize processes.

In three separate incidents, employees pasted confidential semiconductor source code and internal meeting notes into the chatbot.

They were simply looking for help solving technical problems.

But the moment that information was entered into a public AI system, it left Samsung's internal environment.

The company later restricted generative AI usage after the leaks were discovered.

The takeaway is simple.

Even highly technical teams can unintentionally expose intellectual property when AI tools are used without guardrails.


Government Contractor Uploads Flood Victims’ Personal Data

In 2025, a contractor working with the New South Wales Reconstruction Authority uploaded a spreadsheet into ChatGPT while reviewing disaster recovery applications.

The file contained personal information from around 3,000 flood victims, including names, contact details, and health information.

The spreadsheet reportedly contained over 12,000 rows of data from the Northern Rivers Resilient Homes Program.

The contractor was trying to speed up the review process.

Instead, the upload triggered a government investigation and new policies around AI use.

This is a good reminder that AI tools and sensitive personal data can be a dangerous combination when safeguards aren’t in place.


Healthcare Workers Entering Patient Information into AI Tools

Healthcare organizations are increasingly seeing employees use AI tools to summarize patient notes and assist with documentation.

The challenge is that many public AI tools are not covered by a Business Associate Agreement (BAA), which HIPAA requires before an outside vendor can handle patient data on a healthcare organization's behalf.

When protected health information (PHI) is entered into those systems, it can create significant privacy and compliance risk.

Several healthcare organizations have since issued warnings or restricted AI usage after discovering patient data being entered into public AI systems.

Again, the employees involved were not acting maliciously.

They were trying to reduce administrative workload and move faster.

But without guidance, that shortcut creates serious risk.


ChatGPT Bug Exposed Other Users’ Conversations

In March 2023, OpenAI temporarily shut down ChatGPT after a bug exposed parts of other users’ chat histories.

Some users were able to see the titles of other active users' conversations because of a bug in an open-source library the service relied on, the Redis client library redis-py. (OpenAI incident disclosure)

Even though the problem was fixed quickly, the incident highlighted an important reality.

Once data is submitted to an AI platform, organizations may lose control over how that information is stored, processed, or potentially exposed.


Stolen ChatGPT Credentials Expose Prompt Histories

Security researchers have also discovered hundreds of thousands of ChatGPT login credentials being sold on dark web marketplaces after being stolen by infostealer malware.

When attackers gain access to those accounts, they can view the saved conversation history, which may include prompts containing:

- Internal documents

- Source code

- Financial information

- Business strategy discussions

If employees are using personal AI accounts for work tasks, those accounts can become another entry point for attackers.

Why This Is Happening

Here’s the important thing to understand. This isn’t a malicious insider problem. It’s a productivity problem. Employees are discovering tools that help them:

- Write faster

- Summarize information

- Analyze documents

- Generate ideas

- Debug code

And they're getting real value from them. The problem is that most businesses haven't provided guidance on how to use AI safely, so employees fill the gap themselves.

If It’s Free, You Are the Product

There's a simple rule that has been around the internet for years: if it's free, you are the product. Many free AI tools can use what people type into them to improve their models, in some cases by default unless the user opts out. That means the data entered into these systems may be stored, analyzed, or used for training.

For businesses, that can create serious exposure if employees paste sensitive information into those tools.

The Goal Shouldn’t Be to Ban AI

Blocking AI tools entirely usually doesn’t work. Employees will still find ways to use them. The better approach is to put guardrails in place so teams can use AI safely. That includes:

- Creating a clear AI usage policy

- Training employees on responsible AI use

- Defining what company data should never be entered into AI tools

- Evaluating secure AI solutions designed for business use

The companies that benefit most from AI won’t be the ones that ban it. They’ll be the ones that govern it.

AI can absolutely improve productivity. But without clear guidance, it can also create new ways for sensitive data to leave your organization.

Start by helping employees understand how to use AI responsibly. Security awareness training and clear AI usage policies can help your team take advantage of AI while protecting company data.

Now Available: Gen AI Certification From BSN

Lead Strategic AI Conversations with Confidence

Breach Secure Now’s Generative AI Certification helps MSPs simplify the AI conversation, enabling clients to unlock the value of gen AI for their business, build trust, and drive growth – positioning you as a leader in the AI space.